The Problem This Solves

Most enterprise LLM pilots fail for predictable reasons. The model does not know private business context, retrieval quality is uneven, prompts become brittle, and teams cannot explain why one answer is reliable while another is not. The gap between a chatbot demo and a production AI system is usually data engineering, evaluation, and operational discipline.

Fine-tuning and RAG solve different problems. Retrieval brings current, source-grounded context into the model. Fine-tuning changes behavior, format, terminology, and task performance. Choosing the wrong tool creates cost, latency, and quality issues. Choosing both without evaluation creates a system that looks sophisticated but cannot be trusted.

How Vertex Builds It

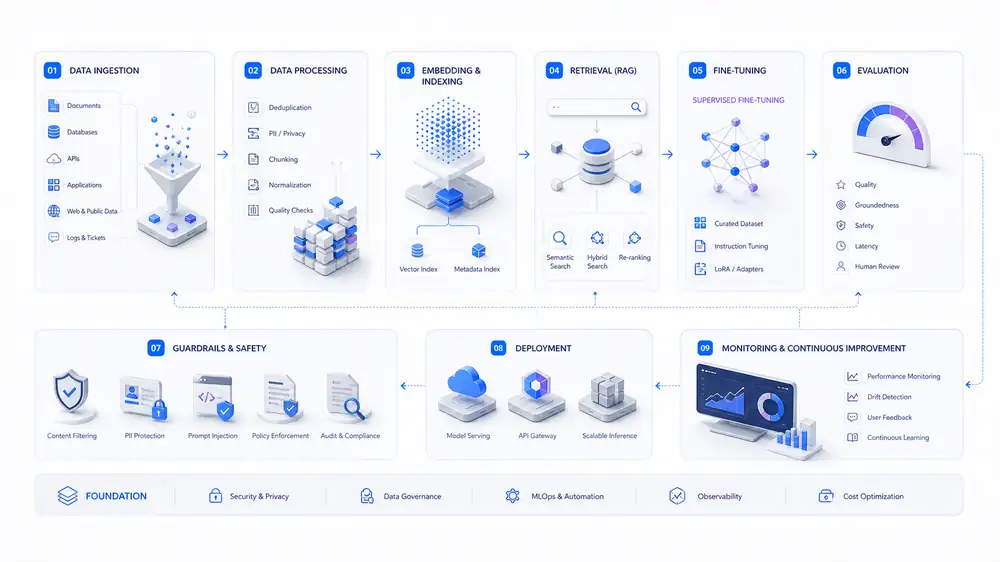

Vertex begins with the target task and answer standard. We define what good output means, what sources are authoritative, what failure modes are unacceptable, and what human review is required. Then we design the data pipeline: ingestion, cleaning, chunking, embeddings, metadata, access control, and retrieval ranking.

For fine-tuning, we assess whether training data is sufficient, whether the improvement can be measured, and whether a smaller or distilled model would reduce cost. We build evaluation sets before tuning so quality changes can be measured against real business tasks, not anecdotes.

Where It Fits

Internal knowledge assistants grounded in policies, manuals, tickets, and technical documents.

Customer support copilots that cite approved sources and follow brand tone.

Document analysis workflows for contracts, reports, claims, or compliance evidence.

Domain-specific agents that produce structured outputs for downstream systems.

Architecture & Delivery Flow

The delivery flow is intentionally practical: validate the business case, identify the riskiest technical assumptions, build the smallest useful production path, and then harden the operating model so the system can be owned after launch.

Expected Outcomes

Higher answer relevance through stronger retrieval and source grounding.

Lower hallucination risk through citations, evals, and guardrails.

Better domain fit through supervised fine-tuning where it is justified.

Lower inference cost through model selection, routing, caching, and distillation.

Faster iteration because every prompt, model, and retrieval change can be measured.

Frequently Asked Questions

Do we need fine-tuning or RAG?

Often RAG comes first. Fine-tuning is useful when the model needs a consistent behavior, style, format, or domain skill that prompting and retrieval cannot reliably produce.

How do you reduce hallucinations?

We combine source-grounded retrieval, answer constraints, citations, automated evaluations, fallback behavior, and human review for high-risk workflows.

Can private enterprise data stay protected?

Yes. The architecture can enforce document permissions, separate indexes, secure model access, logging controls, and provider choices aligned to your privacy requirements.

How is success measured?

We define task-specific evaluation sets with expected answers, citation expectations, format checks, and failure categories before production rollout.