The Problem This Solves

Many cloud programs begin with speed and end with fragmentation. Teams add accounts, clusters, services, and automation before the operating model is clear. Costs rise, observability becomes inconsistent, security exceptions multiply, and latency-sensitive workloads still sit too far from the users, machines, or sensors that depend on them.

Edge computing adds another layer of complexity. Industrial sites, logistics networks, healthcare devices, and AI inference endpoints need local processing, resilience during connectivity gaps, and a clear way to synchronize with central cloud services. Without a disciplined architecture, edge becomes a collection of fragile exceptions instead of a managed extension of the platform.

How Vertex Builds It

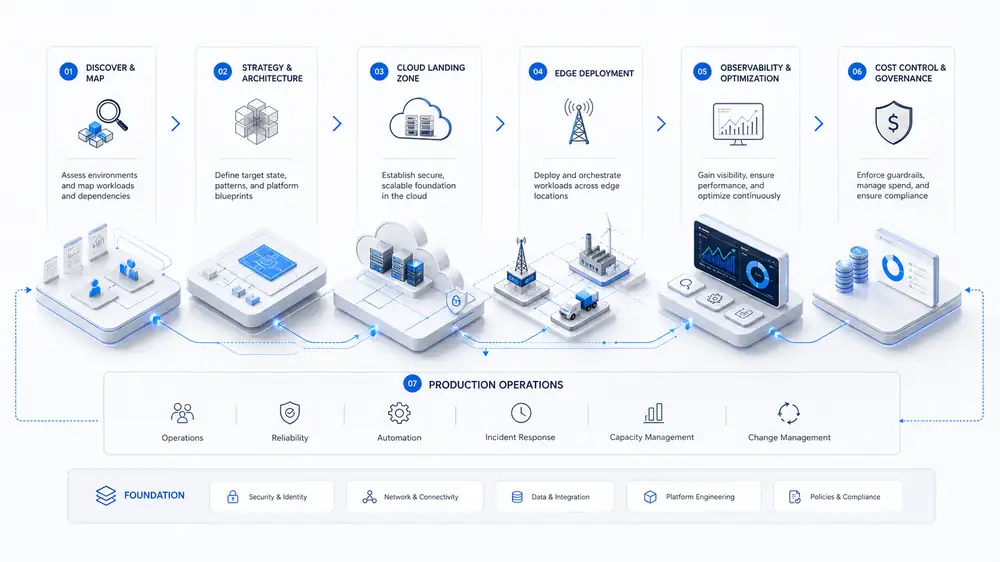

Vertex starts by mapping workloads to business constraints: latency, compliance, data gravity, availability, operational ownership, and expected growth. From there, we define the landing zone, network model, identity boundaries, deployment topology, data movement strategy, and observability baseline.

We then turn the architecture into a production path. That includes infrastructure-as-code, CI/CD integration, policy guardrails, cost tagging, resilience testing, edge update strategy, and runbooks for the teams who will own the system after launch. The result is a platform that developers can use without bypassing security or operations.
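As an illustration of how a cost-tagging guardrail might sit in that production path, here is a minimal sketch of a check that a CI/CD step could run against planned resources. The required tag keys, the `Resource` type, and the function names are all hypothetical; a real policy would come from the landing zone design and the provider's own tooling.

```python
from dataclasses import dataclass, field

# Hypothetical tag policy; real required keys would be set by the landing zone design.
REQUIRED_TAGS = ("owner", "cost_center", "environment")


@dataclass
class Resource:
    """A planned infrastructure resource, reduced to its name and tags."""
    name: str
    tags: dict[str, str] = field(default_factory=dict)


def missing_tags(resource: Resource) -> list[str]:
    """Return the required tag keys that are absent or blank on the resource."""
    return [key for key in REQUIRED_TAGS if not resource.tags.get(key, "").strip()]


def check_plan(resources: list[Resource]) -> dict[str, list[str]]:
    """Map each non-compliant resource name to the tags it is missing."""
    violations: dict[str, list[str]] = {}
    for resource in resources:
        missing = missing_tags(resource)
        if missing:
            violations[resource.name] = missing
    return violations
```

A pipeline could fail the deployment whenever `check_plan` returns a non-empty result, which keeps cost ownership a precondition of launch rather than a later cleanup.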

Where It Fits

Hybrid cloud landing zones for regulated or multi-region businesses.

Edge inference for manufacturing, logistics, retail, and field operations.

Cloud cost optimization for AI, data, and container workloads.

Migration from manually managed infrastructure to repeatable platform patterns.

Architecture & Delivery Flow

The delivery flow is intentionally practical: validate the business case, identify the riskiest technical assumptions, build the smallest useful production path, and then harden the operating model so the system can be owned after launch.

Expected Outcomes

Lower latency for user-, device-, and machine-facing workloads.

Clearer cost ownership through tagging, budgeting, and workload placement.

Reduced operational risk through standard landing zones and deployment paths.

Better resilience through regional failover, edge continuity, and tested recovery plans.

Faster engineering delivery because teams inherit approved platform patterns.

Frequently Asked Questions

When should a workload move to the edge instead of staying in the cloud?

Edge is most useful when latency, connectivity, data volume, privacy, or local autonomy materially changes the business outcome. Vertex evaluates those constraints before recommending edge infrastructure.
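To make that evaluation concrete, the sketch below encodes four of those constraints as simple checks. It is an illustrative heuristic only, not Vertex's actual assessment method; the `Workload` fields, the thresholds implied by them, and the function names are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of a workload's placement constraints."""
    name: str
    max_latency_ms: float        # slowest response the workload can tolerate
    cloud_round_trip_ms: float   # measured round trip to the nearest cloud region
    must_run_offline: bool       # has to keep working during connectivity gaps
    data_must_stay_local: bool   # privacy or residency rule keeps raw data on site
    daily_data_gb: float         # raw data produced at the site per day
    uplink_gb_per_day: float     # data the site link can realistically carry per day


def edge_reasons(w: Workload) -> list[str]:
    """List the constraints that argue for running this workload at the edge."""
    reasons = []
    if w.cloud_round_trip_ms > w.max_latency_ms:
        reasons.append("latency")
    if w.must_run_offline:
        reasons.append("connectivity")
    if w.daily_data_gb > w.uplink_gb_per_day:
        reasons.append("data volume")
    if w.data_must_stay_local:
        reasons.append("privacy")
    return reasons


def recommend(w: Workload) -> str:
    """Recommend 'edge' only when at least one constraint requires it."""
    return "edge" if edge_reasons(w) else "cloud"
```

Defaulting to the cloud unless a constraint forces the move reflects the point above: edge infrastructure should be justified by a material effect on the outcome, not adopted by default.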

Can Vertex work with our existing cloud provider?

Yes. The service is provider-aware rather than provider-prescriptive. We work across AWS, Azure, and GCP and can design hybrid patterns around your existing commitments.

Does this include cloud cost optimization?

Yes. Cost ownership, workload placement, rightsizing, tagging, and usage visibility are part of the architecture, not a separate cleanup activity.

How do you keep edge deployments manageable?

We standardize deployment, configuration, observability, update workflows, and fallback behavior so edge nodes behave like managed platform extensions.
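One way to picture a standardized update workflow with fallback behavior is a staged rollout that updates a few nodes at a time and reverts a batch that fails its health check. The sketch below assumes two hypothetical callbacks supplied by the caller: `apply(node, version)`, which installs a version and returns the one it replaced, and `healthy(node)`, which reports whether the node passes its checks. Neither is a real library API.

```python
from typing import Callable


def staged_rollout(
    nodes: list[str],
    new_version: str,
    apply: Callable[[str, str], str],
    healthy: Callable[[str], bool],
    batch_size: int = 2,
) -> dict:
    """Update nodes in batches; on an unhealthy batch, roll it back and stop."""
    updated: list[str] = []
    for start in range(0, len(nodes), batch_size):
        batch = nodes[start:start + batch_size]
        # Remember the version each node was running so the batch can be reverted.
        previous = {node: apply(node, new_version) for node in batch}
        if not all(healthy(node) for node in batch):
            for node, old_version in previous.items():
                apply(node, old_version)
            return {"status": "rolled_back", "updated": updated, "failed_batch": batch}
        updated.extend(batch)
    return {"status": "complete", "updated": updated, "failed_batch": []}
```

Stopping at the first failed batch limits the impact of a bad release to a handful of sites while earlier, healthy batches stay on the new version.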